Why Most Teams Look at Data but Don’t Actually Use It

Every team says they are data-driven.

They have dashboards. Weekly reports. Monthly reviews. Charts in slides. Metrics in meetings. Numbers everywhere.

Performance still stalls.

The problem is not access to data. The problem is what happens after people see it.

A report from Gartner found that over 70 percent of marketing decisions are still made without effectively using available data. That gap explains a lot. Teams are not lacking information. They are lacking action.

Data Gets Treated Like a Report Card

Most teams use data to explain what has already happened.

Traffic increased.

Engagement dropped.

Conversion stayed flat.

The report gets shared. The meeting ends. Nothing changes.

Data becomes a summary. Not a tool.

In one campaign review, a team presented strong engagement numbers. Clicks were up. Views were steady. The conversation ended there.

“What are we doing differently next week?” someone asked.

Silence.

They had data. They had no decision tied to it.

That is the pattern. Data gets reviewed. It does not get used.

Teams Focus on the Wrong Questions

Looking at data is easy. Asking the right questions is harder.

Most teams ask surface-level questions.

How many clicks did we get?

How many views did we reach?

These questions produce numbers. They do not produce insight.

Better questions sound different.

Where are people dropping off?

What part of the message is unclear?

What expectation did we set that we didn’t meet?

Those questions lead to action.

In one campaign, a team noticed a drop in conversions. The initial assumption was targeting. The real issue showed up deeper. Users were leaving after landing on the page.

“We were looking at the top of the funnel because that’s what the dashboard showed first,” a strategist said. “The problem was happening further down.”

The wrong question delayed the right fix.
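
The "where are people dropping off?" question can be turned into a quick calculation. Below is a minimal sketch, assuming the team can export a visitor count for each funnel stage. The stage names and counts are hypothetical illustration values, not figures from the campaign described above.

```python
def stage_dropoff(funnel):
    """Return (from_stage, to_stage, drop_rate) for each consecutive stage pair."""
    results = []
    for (name_a, count_a), (name_b, count_b) in zip(funnel, funnel[1:]):
        # Share of visitors lost between one stage and the next.
        drop = 0.0 if count_a == 0 else 1 - count_b / count_a
        results.append((name_a, name_b, drop))
    return results


def worst_dropoff(funnel):
    """Return the stage transition that loses the largest share of visitors."""
    return max(stage_dropoff(funnel), key=lambda row: row[2])


# Hypothetical counts, ordered from first stage to last.
funnel = [
    ("ad_click", 800),
    ("landing_page_view", 760),
    ("form_start", 120),
    ("purchase", 90),
]

from_stage, to_stage, drop = worst_dropoff(funnel)
print(f"Biggest loss: {from_stage} -> {to_stage} ({drop:.0%} of visitors)")
```

With these made-up numbers, the largest loss sits between the landing page and the form, not at the top of the funnel. That is the kind of answer a click count alone would not surface.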

Too Much Data Creates Paralysis

More data should create clarity. It often creates confusion.

Teams track everything.

Clicks.

Views.

Time on page.

Bounce rate.

Scroll depth.

Each metric moves differently. Each suggests a different action.

Decision-making slows.

A study from the Journal of Behavioral Decision Making found that excessive information reduces decision quality. More options create hesitation.

In practice, this looks like endless analysis.

Teams debate numbers.

They compare trends.

They wait for certainty.

Certainty does not arrive.

Nothing changes.

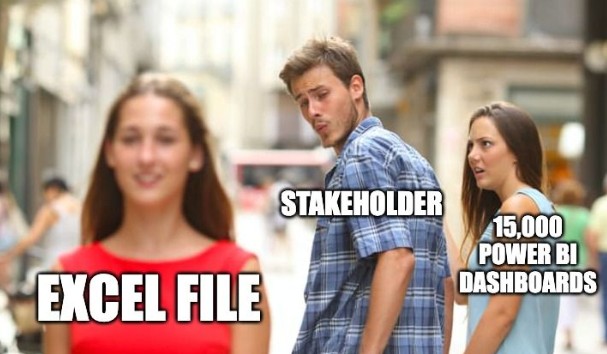

No Clear Ownership of Decisions

Data requires interpretation. Interpretation requires ownership.

Many teams lack clear responsibility.

Who decides what the data means?

Who decides what action to take?

Without answers, data sits in shared spaces.

In one campaign, multiple stakeholders reviewed the same report. Each had a different interpretation. No one made a final decision.

The campaign continued unchanged.

“Everyone agreed something was off,” one team member said. “No one owned the fix.”

Ownership turns insight into action.

Data Feels Safer Than Action

Looking at data feels productive.

It creates a sense of progress without risk.

Action carries risk.

Changing a campaign might fail.

Adjusting messaging might backfire.

Testing something new might not work.

Teams avoid that risk by staying in analysis mode.

Maryam Simpson described this pattern during a campaign discussion. “We had three weeks of reports showing the same drop in performance,” she said. “Everyone kept reviewing the numbers. No one wanted to change the campaign because it might make things worse.”

The campaign stayed the same. Results did not improve.

Data without action becomes a comfort zone.

Metrics Get Misinterpreted

Even when teams act, they often act on the wrong interpretation.

A high click-through rate suggests success. It might signal curiosity without conversion.

A long time on page suggests engagement. It might signal confusion.

A high bounce rate suggests failure. It might signal efficiency: the visitor found the answer and left.

Without context, metrics mislead.

In one campaign, a team saw high engagement on a post. They replicated the format. Performance dropped.

The original success came from timing. Not format.

“We copied the output,” a strategist said. “We didn’t understand the cause.”

Data requires context to be useful.
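
One way to add context is to never read a metric alone. The sketch below pairs click-through rate with the conversion rate of those clicks, so a high click-through rate gets a second look before anyone calls it a win. The function name and both threshold values are assumptions chosen for illustration; a real team would set its own.

```python
def read_click_metrics(impressions, clicks, conversions,
                       ctr_floor=0.02, cvr_floor=0.01):
    """Read click-through rate alongside the conversion rate of those clicks.

    ctr_floor and cvr_floor are hypothetical thresholds, not benchmarks.
    """
    ctr = clicks / impressions if impressions else 0.0
    cvr = conversions / clicks if clicks else 0.0

    if ctr >= ctr_floor and cvr < cvr_floor:
        reading = "curiosity without conversion"
    elif ctr >= ctr_floor:
        reading = "clicks are converting"
    else:
        reading = "low click-through"
    return ctr, cvr, reading


# Hypothetical campaign numbers.
ctr, cvr, reading = read_click_metrics(impressions=10_000, clicks=500, conversions=2)
print(f"CTR {ctr:.1%}, conversion {cvr:.1%}: {reading}")
```

A label like this does not explain the cause. It only flags which numbers deserve a closer look before the team copies what appears to be working.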

Reporting Cycles Are Too Slow

Data arrives in cycles.

Weekly reports. Monthly summaries. Quarterly reviews.

By the time teams act, the opportunity has passed.

High-performing teams operate faster.

They review data in shorter cycles.

They test changes quickly.

They adjust in real time.

Speed turns data into an advantage.

Slow cycles turn it into history.

What Actually Works

Using data effectively requires a shift in approach.

Start with fewer metrics.

Choose three that matter.

Acquisition quality.

Engagement depth.

Conversion outcomes.

Ignore the rest.

This reduces noise.

Tie Every Metric to a Decision

Every number should lead to a question.

If engagement drops, what changes?

If conversions stall, what gets tested?

If there is no decision, the metric has no value.

In one campaign, a team created a simple rule.

Every report must include one action.

No action, no report.

Decisions improved.
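
The "no action, no report" rule can be written down as a simple check. This is a rough sketch under stated assumptions, not the team's actual process: the `DecisionRule` and `Report` classes, the threshold, the owner, and the action text are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class DecisionRule:
    metric: str
    threshold: float  # rule fires when the metric falls below this value
    action: str       # what gets tested or changed
    owner: str        # who is responsible for the action


@dataclass
class Report:
    metrics: dict
    actions: list = field(default_factory=list)


def build_report(metrics, rules):
    """Attach an action for every rule whose metric is below its threshold."""
    report = Report(metrics=dict(metrics))
    for rule in rules:
        value = metrics.get(rule.metric)
        if value is not None and value < rule.threshold:
            report.actions.append(f"{rule.owner}: {rule.action}")
    return report


def publish(report):
    """Refuse to publish a report that carries no action."""
    if not report.actions:
        raise ValueError("No action, no report")
    return report


# Hypothetical rule and metric values.
rules = [DecisionRule("conversion_rate", 0.02,
                      "test a shorter signup form", "growth lead")]
report = publish(build_report({"conversion_rate": 0.015}, rules))
print(report.actions)
```

Each rule names an owner as well as an action, so the question of who acts is settled before the number arrives. A team that wants a report even when nothing triggers could add a default action, such as naming the next test to run.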

Shorten the Feedback Loop

Speed matters.

Review data daily, or weekly at the slowest.

Test small changes immediately.

Measure results quickly.

This creates a cycle.

Observe.

Act.

Learn.

Repeat.

Short cycles increase learning.

Combine Data with Observation

Numbers show patterns. They do not explain them.

Observation fills the gap.

Watch user behavior.

Read feedback.

Talk to customers.

In one campaign, data showed users leaving a page quickly. The assumption was poor targeting. User feedback revealed confusion about pricing.

The page changed. Conversions improved.

The metric identified the issue. Observation solved it.

Create a Culture of Action

Data becomes useful when teams expect action.

Set clear ownership.

Define decision rules.

Reward testing.

Make action normal.

In one team, every metric review ended with a test plan. No exceptions.

Performance improved because learning increased.

The Takeaway

Most teams do not lack data. They lack decisions.

They review numbers. They discuss trends. They delay action.

The gap between insight and execution limits performance.

Maryam Simpson summarized it during a strategy session. “If the data doesn’t change what you do next, it’s just a report,” she said.

That line captures the problem.

Data matters. Action matters more.

Use data to decide. Not just to describe.

That shift turns information into results.